The Hidden Problem with AI Optimization and Sampling

Why AI systems favor average results over the best ones, and how robust reinforcement learning improves real-world performance.

Resources

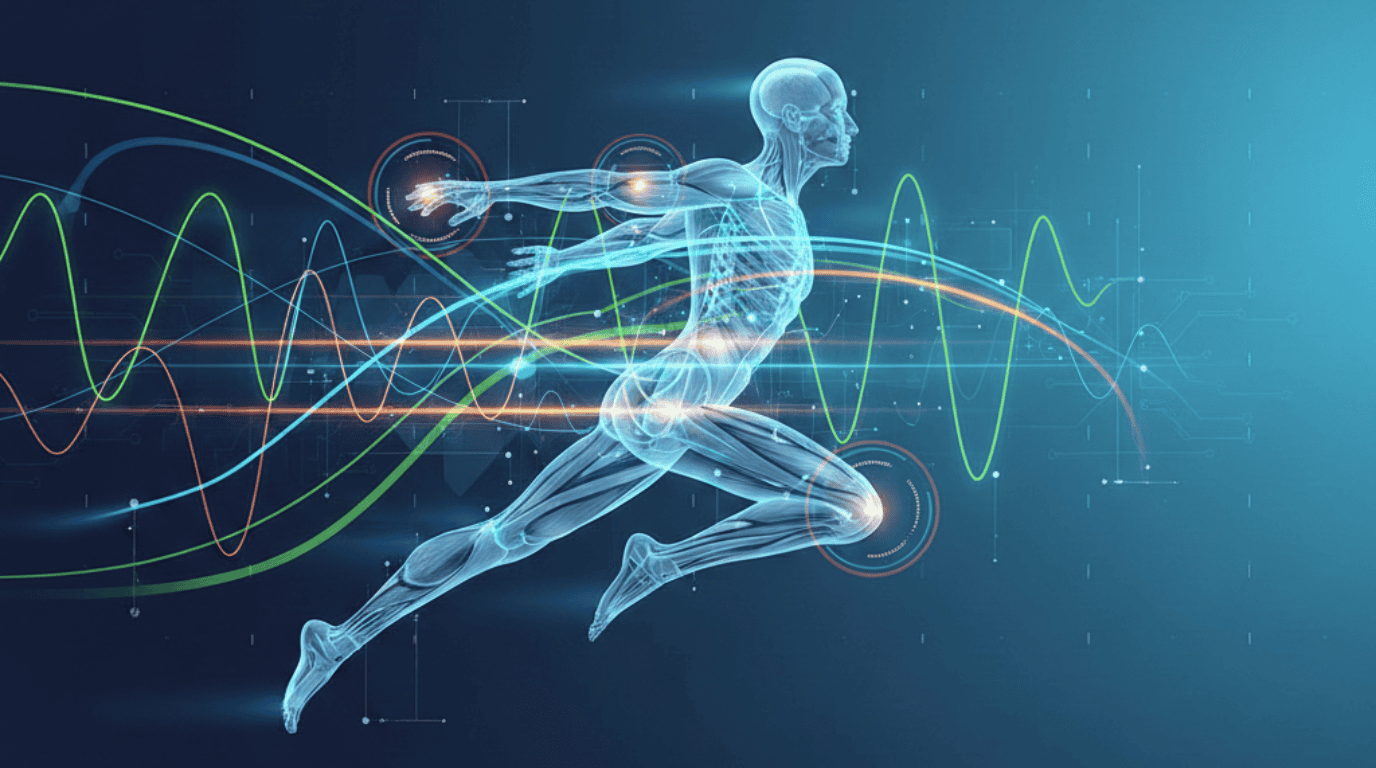

How kinematics-based motion analysis improves data labeling, automated quality control, and computer vision models for fitness and robotics.

Physical AI starts with data, not models. Learn how ontologies and context drive smarter, real-world AI systems.

AI systems can fail due to hidden blind spots. Learn how enterprises detect edge cases and structural gaps before deployment.

Trace datasets reveal how AI agents behave and enable automated agentic AI evaluation for reliability, safety, and compliance.

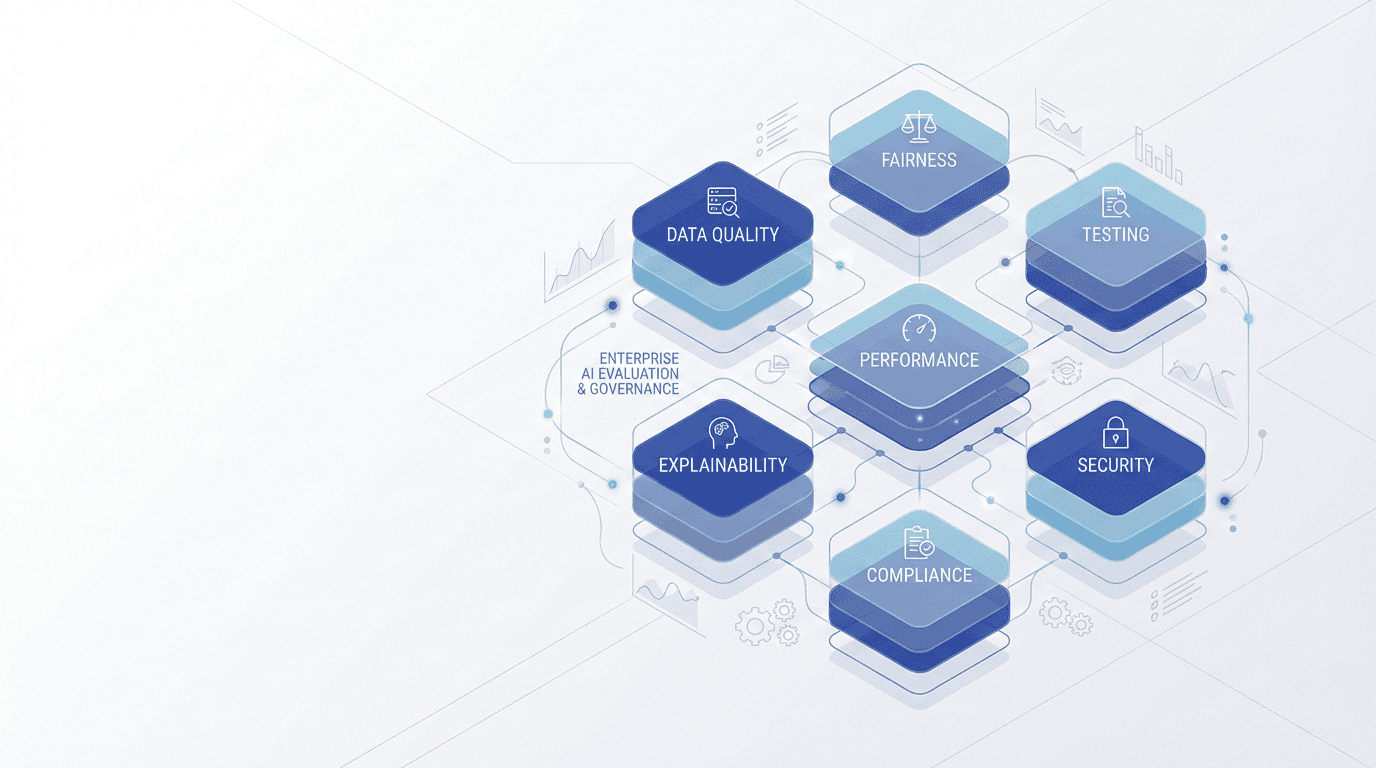

AI evaluation helps enterprises ensure accuracy, fairness, security, and trust. Learn the seven core components needed to keep AI reliable over time.

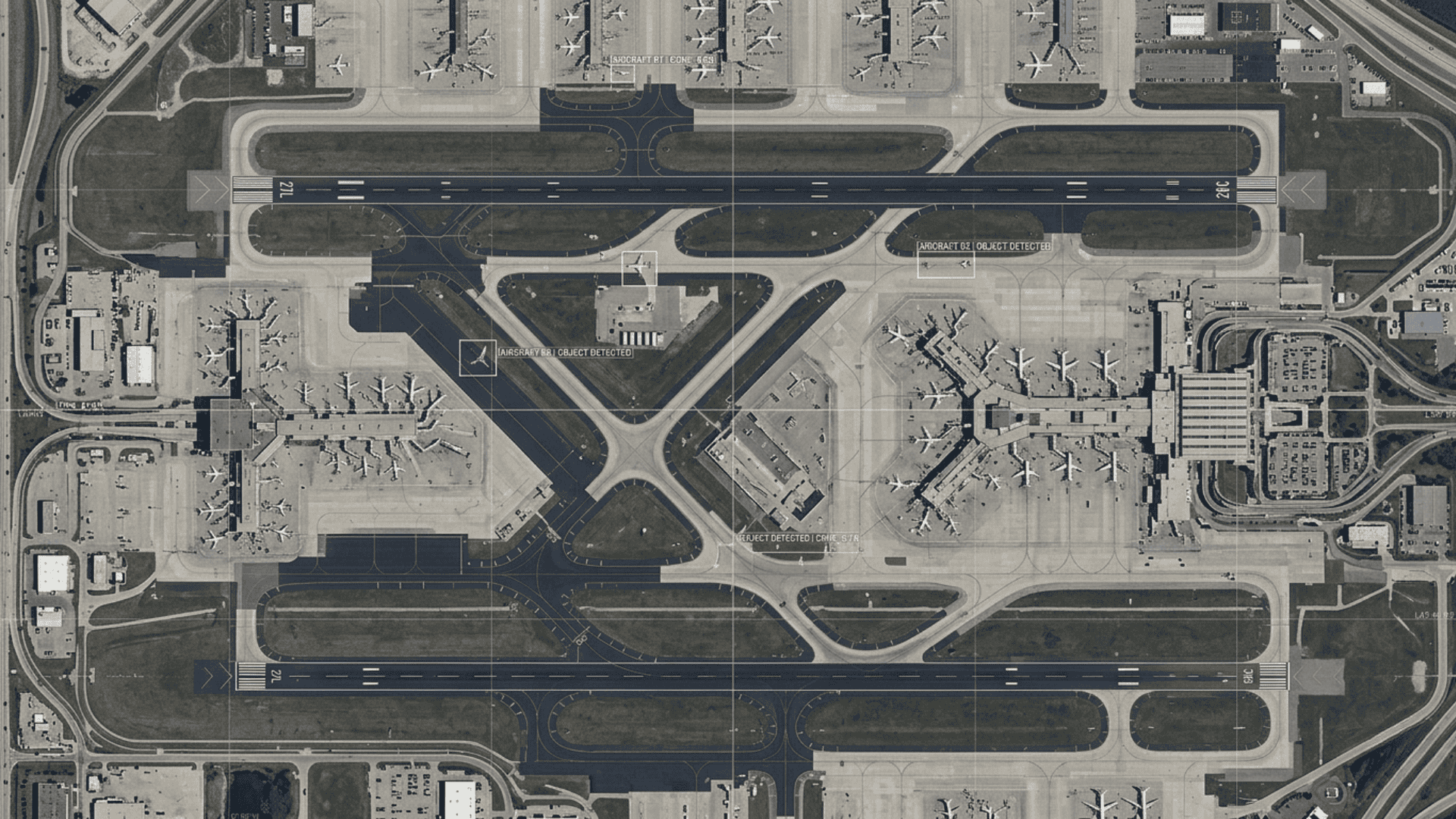

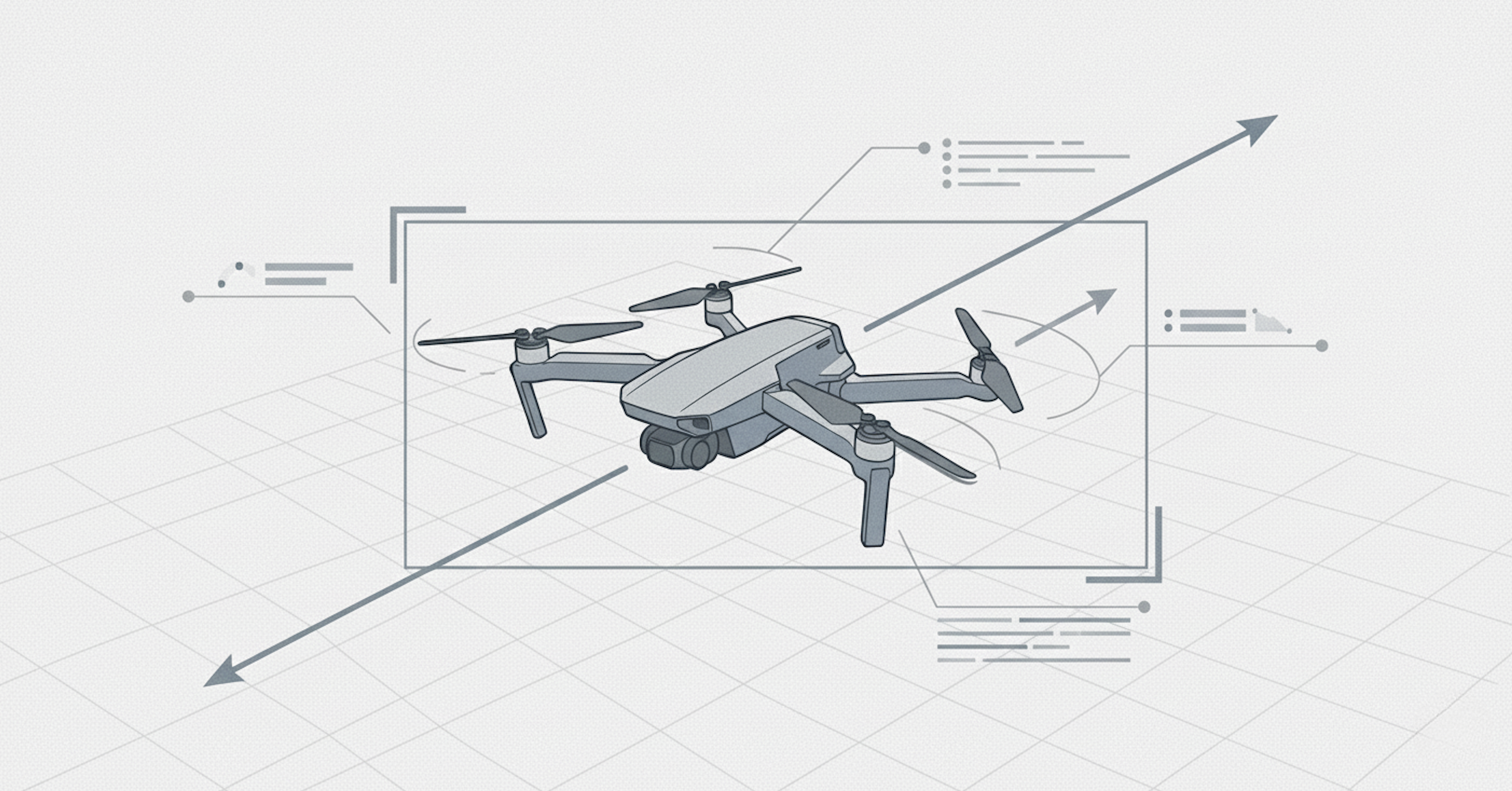

Innodata presents UAV tracking results on the Anti-UAV benchmark, demonstrating accuracy, robustness, and deployment-ready performance.

A practical guide to implementing AI TRiSM in agentic AI systems for secure, compliant, and trustworthy enterprise AI deployment.